The $80 Billion Problem Hiding in Plain Sight

AI voice agents are no longer experimental. In 2026, they are core business infrastructure. Gartner forecasts that conversational AI will cut global contact centre labour costs by USD 80 billion this year alone, and the global voice AI agents market is projected to reach USD 47.5 billion by 2034, growing at a staggering 34.8 percent CAGR. Eighty percent of businesses now plan to integrate AI-driven voice technology into their customer service operations. The opportunity is enormous, but so is the failure rate.

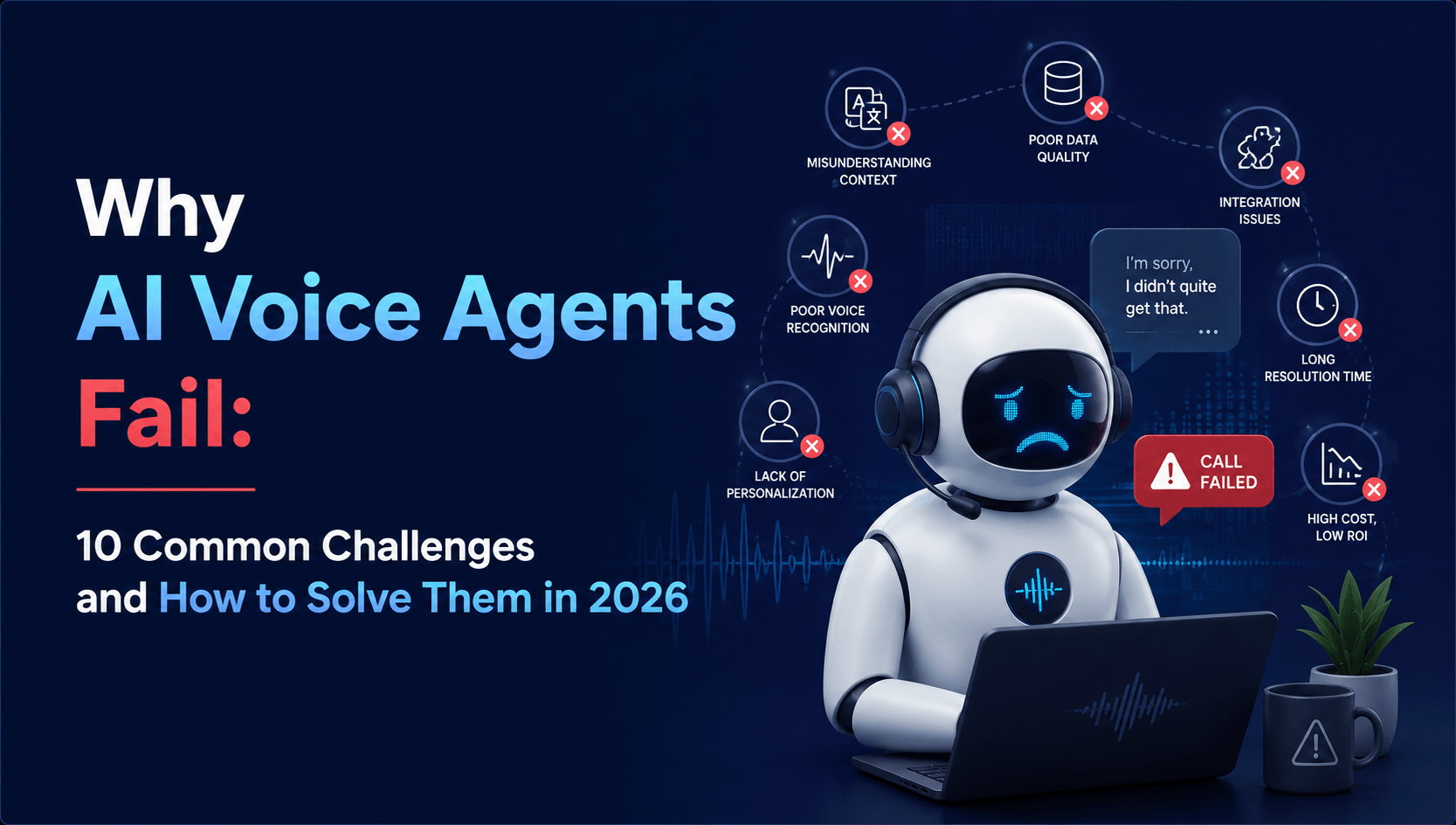

Here is the uncomfortable truth: 57 percent of failed AI initiatives stem from unrealistic expectations, and 38 percent from poor data quality, according to Gartner’s 2026 research. Production voice agent deployments grew 340 percent year-over-year, yet most businesses deploying voice AI encounter the same recurring failures — latency that frustrates callers, accent misrecognition that alienates customers, hallucinated responses that destroy trust, and security gaps that invite regulatory penalties.

The good news? Nearly every voice AI failure comes down to architecture, not model choice. Get the fundamentals right, and the same large language model that was struggling suddenly performs like a senior agent. In this guide, we break down the 10 most common reasons why AI voice agents fail and provide actionable, production-tested solutions for each one. Whether you are building your first voice agent or troubleshooting a stalled deployment, this is the checklist you need.

Quick Reference: 10 Challenges at a Glance

| # | Challenge | Key Solution |
|---|-----------|--------------|
| 1 | High Response Latency | Edge-deployed ASR + streaming TTS pipelines |
| 2 | Poor Accent & Dialect Recognition | Region-specific ASR models + custom phonetic lexicons |
| 3 | Background Noise Failures | Real-time noise suppression + confidence-based fallbacks |
| 4 | Hallucination & Incorrect Responses | RAG architecture + grounded knowledge bases |
| 5 | Interruption Handling (Barge-In) | Turn-taking detection with VAD + streaming partial results |
| 6 | Broken Escalation to Human Agents | Intent-based routing with sentiment-triggered handoff |
| 7 | Integration Failures with Backend Systems | API-first middleware layer with retry logic + circuit breakers |
| 8 | Security & Compliance Gaps | End-to-end encryption + PII redaction + SOC 2/HIPAA frameworks |
| 9 | Multilingual & Code-Switching Failures | Multilingual NLU models + language-detection switching layer |
| 10 | Unrealistic ROI Expectations | Phased deployment + measurable KPIs per stage |

Challenge 1: High Response Latency

Nothing kills a voice AI conversation faster than silence. A peer-reviewed ACM study found statistically significant degradation in user engagement, impression, and willingness to re-engage at just 4 seconds of response delay. In production environments, callers expect sub-second responses — anything beyond 1.5 seconds feels robotic and unnatural.

The latency problem compounds across the voice AI pipeline: speech-to-text (STT) processing, LLM inference, and text-to-speech (TTS) synthesis each add delay. When you stack these sequentially, total response time easily exceeds 3–4 seconds.

How to solve it: Deploy edge-optimised ASR (automatic speech recognition) models closer to the caller. Use streaming STT that begins processing audio in real time rather than waiting for the caller to finish speaking. Implement streaming TTS that starts speaking the first sentence while the LLM is still generating the rest. The target benchmark for production voice agents in 2026 is approximately 600 milliseconds end-to-end latency. Architecting for this from day one prevents costly rework later.
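The arithmetic behind streaming is simple enough to sketch. The example below compares a sequential pipeline, where each stage waits for the previous one to finish, with a streaming pipeline, where each stage starts as soon as it receives the first usable chunk. The per-stage timings are hypothetical placeholders chosen for illustration, not measurements of any particular STT, LLM, or TTS provider.

```python
from dataclasses import dataclass


@dataclass
class Stage:
    """One pipeline stage with hypothetical timings in milliseconds."""
    name: str
    first_chunk_ms: int  # delay until the stage emits its first usable output
    complete_ms: int     # delay until the stage has produced all of its output


def sequential_latency_ms(stages: list[Stage]) -> int:
    # Each stage waits for the previous stage to finish completely.
    return sum(stage.complete_ms for stage in stages)


def streaming_latency_ms(stages: list[Stage]) -> int:
    # Each stage starts on the previous stage's first chunk, so the caller
    # hears audio after the sum of the first-chunk delays.
    return sum(stage.first_chunk_ms for stage in stages)


# Illustrative numbers only, measured from the moment the caller stops speaking.
PIPELINE = [
    Stage("STT", first_chunk_ms=150, complete_ms=700),
    Stage("LLM", first_chunk_ms=300, complete_ms=2200),  # first sentence vs full reply
    Stage("TTS", first_chunk_ms=150, complete_ms=900),   # first audio vs full synthesis
]

if __name__ == "__main__":
    print("sequential:", sequential_latency_ms(PIPELINE), "ms")
    print("streaming: ", streaming_latency_ms(PIPELINE), "ms")
```

With these placeholder numbers the sequential path takes 3,800 ms, in line with the 3 to 4 second range above, while the streaming path reaches the 600 ms target. The model is deliberately simplified: it assumes each stage's first chunk is enough for the next stage to begin useful work.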

Challenge 2: Poor Accent and Dialect Recognition

A voice agent that works perfectly in a demo with standard American English will often break down in production when it encounters Australian accents, Indian English, regional British dialects, or non-native speakers. This is one of the most common reasons why AI voice agents fail in real-world deployments, particularly for businesses serving diverse markets across the USA, Australia, India, South Africa, and the UK.

Standard ASR models are typically trained on datasets dominated by a narrow set of accents, often recorded in clean conditions. Their accuracy degrades significantly when exposed to regional pronunciation patterns, variations in speech cadence, and phonetic differences.

How to solve it: Fine-tune ASR models with accent-specific training data from your actual customer base. Build custom phonetic lexicons for industry-specific terminology and regional expressions. Implement confidence-based fallbacks so the system asks for clarification rather than hallucinating around misrecognised input. For businesses operating across multiple geographies, working with an AI development partner experienced in multilingual NLP is critical.
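A minimal sketch of the lexicon and fallback ideas, assuming the ASR engine returns an utterance-level confidence score between 0 and 1. It corrects known misrecognitions with a post-recognition lookup table, which is a simpler stand-in for a true phonetic lexicon (many ASR services instead accept custom vocabulary or phrase hints at recognition time). The lexicon entries, the `Transcript` type, and the 0.75 threshold are all hypothetical.

```python
import re
from dataclasses import dataclass

# Hypothetical table of frequent misrecognitions mapped to canonical domain terms.
LEXICON = {
    "super annuation": "superannuation",
    "e f t pos": "EFTPOS",
    "pay pal": "PayPal",
}

# Assumed threshold; tune it against labelled calls from your own customer base.
CONFIDENCE_THRESHOLD = 0.75


@dataclass
class Transcript:
    text: str
    confidence: float  # 0.0-1.0, as reported by the ASR engine (assumed field)


def apply_lexicon(text: str, lexicon: dict[str, str] = LEXICON) -> str:
    """Replace whole-word misrecognitions with their canonical spelling."""
    for heard, canonical in lexicon.items():
        pattern = rf"\b{re.escape(heard)}\b"
        text = re.sub(pattern, canonical, text, flags=re.IGNORECASE)
    return text


def handle_transcript(transcript: Transcript) -> tuple[str, str]:
    """Return ("clarify", prompt) for low-confidence input, else ("proceed", text)."""
    if transcript.confidence < CONFIDENCE_THRESHOLD:
        # Ask again instead of guessing at what the caller meant.
        return ("clarify", "Sorry, I didn't quite catch that. Could you say it again?")
    return ("proceed", apply_lexicon(transcript.text))
```

For example, `handle_transcript(Transcript("check my super annuation balance", 0.91))` proceeds with the corrected text, while the same words at confidence 0.40 produce a clarification prompt.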

Appther’s AI & ML Development Services include purpose-built NLP models trained for accent diversity and multilingual customer bases.

Challenge 3: Background Noise Failures

Lab benchmarks and production reality are worlds apart. An Interspeech study found that overlapping speech at moderate noise levels pushed transcription error rates to 74.6 percent — compared with 16.8 percent on clean audio. That is a 4.4x degradation. Background noise alone caused a 2x increase. Callers are often in cars, busy offices, shopping centres, or outdoor environments. If your voice agent cannot handle noise, it cannot serve real customers.

How to solve it: Implement real-time noise suppression and echo cancellation in the audio preprocessing layer before audio reaches the STT engine. Where you control the capture hardware (for example, a kiosk or in-car system with a microphone array), use beamforming for directional audio capture; a standard phone call arrives as a single mixed channel, so beamforming cannot be applied on the server side. Deploy confidence-scored transcription where low-confidence segments trigger clarification prompts rather than silent failures. Always test your voice agent under production-like acoustic conditions, and never accept accuracy claims based on studio-quality benchmarks.
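To make the idea of a preprocessing layer concrete, here is a deliberately crude energy-based noise gate in plain Python. It silences frames whose energy falls below a multiple of an estimated noise floor, assuming the first few frames contain only background noise. This is a swapped-in simplification: production systems use spectral or neural suppressors (RNNoise is one open-source example) that can also clean noise overlapping speech, which a gate cannot do. The frame size and margin are illustrative values.

```python
import math


def frame_rms(frame: list[float]) -> float:
    """Root-mean-square energy of one frame of samples."""
    return math.sqrt(sum(x * x for x in frame) / len(frame))


def noise_gate(samples: list[float], frame_size: int = 160,
               noise_frames: int = 5, margin: float = 2.0) -> list[float]:
    """Zero out frames quieter than `margin` times the estimated noise floor.

    Assumes the first `noise_frames` frames are background noise only.
    160 samples is 10 ms of audio at an 8 kHz telephony sample rate.
    """
    frames = [samples[i:i + frame_size] for i in range(0, len(samples), frame_size)]
    if not frames:
        return []
    leading = frames[:max(1, noise_frames)]
    noise_floor = sum(frame_rms(f) for f in leading) / len(leading)
    gated: list[float] = []
    for frame in frames:
        if frame_rms(frame) < margin * noise_floor:
            gated.extend([0.0] * len(frame))  # treat as noise: silence the frame
        else:
            gated.extend(frame)  # keep frames that rise clearly above the floor
    return gated
```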

Challenge 4: Hallucination and Incorrect Responses

When an AI voice agent confidently states incorrect information (a wrong account balance, an inaccurate policy detail, or a fabricated product feature), the damage to customer trust is immediate and often irreversible. Hallucination is especially dangerous in voice interactions because callers cannot easily verify information in real time the way they can when reading text on a screen.

How to solve it: Implement a Retrieval-Augmented Generation (RAG) architecture that grounds every response in verified, real-time data from your knowledge base, CRM, or core business systems. Constrain the LLM’s response scope so it only answers questions within its authorised domain. Build explicit “I don’t know” pathways — a voice agent that honestly says “Let me transfer you to a specialist” is infinitely more valuable than one that confidently makes up an answer.
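A minimal sketch of the retrieve-then-ground flow with an explicit fallback. To stay self-contained it scores passages by word overlap, a stand-in for the embedding-based vector search a real RAG pipeline would use, and it returns the grounded prompt instead of calling a model. The knowledge-base passages, the `MIN_OVERLAP` threshold, and the prompt wording are hypothetical.

```python
import re
from typing import Optional

# Hypothetical verified knowledge base: topic -> passage.
KNOWLEDGE_BASE = {
    "refunds": "Refunds are processed within 5 business days of approval.",
    "hours": "Our support line is open 8am to 8pm, Monday to Friday.",
}

MIN_OVERLAP = 2  # assumed minimum shared words for a passage to count as relevant

TRANSFER_MESSAGE = "I don't have that information. Let me transfer you to a specialist."


def tokens(text: str) -> set[str]:
    """Lower-cased alphanumeric words in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str) -> Optional[tuple[str, int]]:
    """Return (best passage, overlap score), or None if the knowledge base is empty."""
    q = tokens(question)
    scored = [(p, len(q & tokens(p))) for p in KNOWLEDGE_BASE.values()]
    return max(scored, key=lambda item: item[1], default=None)


def plan_response(question: str) -> tuple[str, str]:
    """Return ("llm", grounded prompt) or ("transfer", message)."""
    hit = retrieve(question)
    if hit is None or hit[1] < MIN_OVERLAP:
        return ("transfer", TRANSFER_MESSAGE)  # the explicit "I don't know" pathway
    passage, _ = hit
    prompt = (
        "Answer using ONLY the context below. If the context does not contain "
        "the answer, say you will transfer the caller to a specialist.\n"
        f"Context: {passage}\n"
        f"Question: {question}"
    )
    return ("llm", prompt)
```

The design point is that the transfer decision happens before the model is called, so an unsupported question never reaches the LLM at all. Raw word overlap also rewards common words such as "to", which is one reason real systems use semantic retrieval and a tuned relevance threshold.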

Appther builds enterprise voice AI on AI chatbot and conversational AI architectures that use RAG pipelines to minimise hallucination in production.

Challenge 5: Poor Interruption Handling (Barge-In)

Humans naturally interrupt each other during conversations. They correct themselves mid-sentence, add context, or cut off a response they did not need. Most voice agents handle this poorly — either ignoring the interruption completely or resetting the entire conversation, forcing the caller to start over. This creates a frustrating, unnatural experience that drives callers to demand a human agent.

How to solve it: Implement Voice Activity Detection (VAD) with turn-taking logic that detects when a caller begins speaking and gracefully pauses the agent’s response. Use streaming partial transcription results to understand the interruption’s intent before deciding whether to stop, modify, or continue the current response. The conversation state must persist through interruptions — losing context on barge-in is a critical architecture flaw, not an LLM limitation.
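The turn-taking logic can be sketched as a small pair of event handlers. This assumes a VAD that reports when the caller starts speaking and a streaming recogniser that delivers partial transcripts; the `BACKCHANNELS` set, the handler names, and the returned action strings are hypothetical. The key design choice is that conversation history is never cleared on barge-in: the agent records how much of its reply the caller actually heard, so the next turn can build on it.

```python
from dataclasses import dataclass, field

# Hypothetical short acknowledgements that should not stop the agent's reply.
BACKCHANNELS = {"uh huh", "mm hmm", "okay", "ok", "yeah", "right"}


@dataclass
class ConversationState:
    history: list[tuple[str, str]] = field(default_factory=list)  # (speaker, text)
    agent_speaking: bool = False
    pending_reply: str = ""  # the reply currently being played by TTS
    spoken_chars: int = 0    # how much of pending_reply has been played so far


def on_speech_start(state: ConversationState) -> str:
    """VAD reports caller speech: pause playback, but keep all state."""
    return "pause_tts" if state.agent_speaking else "listen"


def on_partial_transcript(state: ConversationState, partial: str) -> str:
    """Use the partial transcript to decide whether to resume or yield the turn."""
    if not state.agent_speaking:
        return "listen"
    if partial.strip().lower() in BACKCHANNELS:
        return "resume_tts"  # caller is only acknowledging; carry on speaking
    # A real interruption: record what the caller actually heard, then yield.
    # The caller's final transcript can later replace this partial in history.
    heard = state.pending_reply[:state.spoken_chars]
    state.history.append(("agent", heard + " [interrupted]"))
    state.history.append(("caller", partial))
    state.agent_speaking = False
    return "stop_and_respond"
```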

Challenge 6: Broken Escalation to Human Agents

A voice agent that cannot recognise when it is out of its depth does more harm than no automation at all. The most damaging scenario is not a voice agent failing to answer a question — it is a voice agent that keeps trying when it should have transferred the call three exchanges ago. Frustrated callers who finally reach a human agent after a painful bot experience have already decided the company does not value their time.

How to solve it: Design explicit escalation triggers based on intent confidence scores, sentiment detection, and conversation loop detection. If the agent detects frustration (raised voice, repeated requests, negative language), it should immediately offer a human handoff — not attempt another scripted response. Build warm transfer protocols that pass full conversation context and transcript to the human agent, so the caller never has to repeat themselves. The escalation path is as important as the automation itself.
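A minimal sketch of folding the three triggers into one decision, plus the context package for a warm transfer. It assumes an NLU component that returns an intent with a confidence score and a sentiment model that scores each turn from -1 (negative) to 1 (positive); acoustic cues such as a raised voice would have to feed into that score. All thresholds, types, and field names are hypothetical starting points.

```python
from dataclasses import dataclass, field
from typing import Optional

# Assumed thresholds; calibrate them against real escalation outcomes.
MIN_INTENT_CONFIDENCE = 0.6
NEGATIVE_SENTIMENT = -0.5
LOOP_TURNS = 3  # the same intent on this many consecutive turns suggests a loop


@dataclass
class Turn:
    text: str
    intent: str
    intent_confidence: float
    sentiment: float  # -1.0 (very negative) to 1.0 (very positive)


@dataclass
class CallSession:
    caller_id: str
    turns: list[Turn] = field(default_factory=list)


def escalation_reason(call: CallSession) -> Optional[str]:
    """Return why the call should go to a human, or None to continue."""
    if not call.turns:
        return None
    last = call.turns[-1]
    if last.sentiment <= NEGATIVE_SENTIMENT:
        return "negative_sentiment"
    if last.intent_confidence < MIN_INTENT_CONFIDENCE:
        return "low_intent_confidence"
    recent = [t.intent for t in call.turns[-LOOP_TURNS:]]
    if len(recent) == LOOP_TURNS and len(set(recent)) == 1:
        return "conversation_loop"
    return None


def warm_transfer_payload(call: CallSession, reason: str) -> dict:
    """Everything the human agent needs so the caller never repeats themselves."""
    return {
        "caller_id": call.caller_id,
        "reason": reason,
        "last_intent": call.turns[-1].intent if call.turns else None,
        "transcript": [t.text for t in call.turns],
    }
```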

Challenge 7: Integration Failures with Backend Systems

A voice agent that can chat but cannot act is just a novelty. Real value comes from integration with core business systems — CRM platforms, ERP systems, payment gateways, appointment schedulers, and ticketing systems. Yet integration is where most production deployments break down. API timeouts, authentication failures, data format mismatches, and rate limiting cause the voice agent to freeze mid-conversation or return vague error responses.

How to solve it: Build an API-first middleware layer between the voice agent and your backend systems. Implement retry logic with exponential backoff, circuit breakers to prevent cascading failures, and graceful degradation paths that keep the conversation flowing even when a backend service is temporarily unavailable. The voice agent should always have a fallback response rather than going silent.
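Retry with exponential backoff and a circuit breaker can be sketched in standard-library Python. This is a generic illustration of the two patterns, not the API of any particular middleware product; the thresholds, the fallback text, and the function names are assumptions. The breaker stops calling a backend after repeated failures and returns the fallback immediately until a cool-down has passed, so the agent always has something to say.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitBreaker:
    """Opens after `failure_threshold` consecutive failures; allows a trial call after `reset_after` seconds."""

    def __init__(self, failure_threshold: int = 3, reset_after: float = 30.0):
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after
        self.failures = 0
        self.opened_at: Optional[float] = None

    def allow(self) -> bool:
        if self.opened_at is None:
            return True
        # Half-open: let a trial call through once the cool-down has elapsed.
        return time.monotonic() - self.opened_at >= self.reset_after

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = time.monotonic()  # (re)open the circuit


def call_with_resilience(fn: Callable[[], T], breaker: CircuitBreaker, fallback: T,
                         retries: int = 3, base_delay: float = 0.2) -> T:
    """Retry `fn` with exponential backoff and jitter; return `fallback` if it keeps failing."""
    if not breaker.allow():
        return fallback  # circuit open: degrade gracefully without waiting
    for attempt in range(retries):
        try:
            result = fn()
            breaker.record_success()
            return result
        except Exception:
            breaker.record_failure()
            if not breaker.allow() or attempt == retries - 1:
                break
            # Delays of base, 2x base, 4x base ..., plus jitter to avoid synchronised retries.
            time.sleep(base_delay * (2 ** attempt) + random.uniform(0, base_delay))
    return fallback
```

On a live call the whole retry budget has to fit inside the conversational latency target, so `retries` and `base_delay` should stay small and the fallback line (for example, offering to take the caller's details) should carry the rest.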

Appther’s AI product engineering team specialises in building robust integration layers that connect voice AI to enterprise backends including Salesforce, SAP, Oracle, Odoo, and custom platforms.

Challenge 8: Security and Compliance Gaps

Voice AI systems handle sensitive data by nature — names, account numbers, payment details, medical information. The security threats in 2026 are real and growing. Voice cloning attacks can impersonate customers or executives. Inaudible command injection can manipulate voice agents without the caller’s knowledge. Unintended recording and storage of voice data creates privacy liability under GDPR, HIPAA, CCPA, and Australia’s Privacy Act 2026 reforms.

How to solve it: Implement end-to-end encryption for all voice data in transit and at rest. Deploy real-time PII (personally identifiable information) redaction that automatically masks sensitive fields before storage. Use voiceprint verification, paired with anti-spoofing (liveness) checks to counter the voice cloning risk, rather than relying on knowledge-based authentication alone. Ensure your voice AI platform holds a SOC 2 Type II attestation, a HIPAA Business Associate Agreement (BAA) for healthcare use cases, and GDPR-ready data processing agreements. Security must be designed into the architecture from day one, not bolted on after deployment.
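A minimal regex-based redaction pass that could run on transcripts before they are stored. It masks card numbers (using the Luhn checksum so that not every long number is treated as a card), email addresses, and phone-like digit runs. It assumes the ASR output renders spoken numbers as digits, and the patterns are simplified assumptions for illustration; production redaction usually combines patterns like these with trained entity recognisers and must be validated against your own data and regulatory scope.

```python
import re

# Simplified, illustrative patterns; real deployments need broader coverage.
CARD_CANDIDATE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")  # 13-19 digits
EMAIL = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")
PHONE = re.compile(r"\+?\(?\d[\d ()-]{7,}\d")


def luhn_valid(number: str) -> bool:
    """Luhn checksum, used by payment card numbers."""
    digits = [int(ch) for ch in number if ch.isdigit()]
    total = 0
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return len(digits) >= 13 and total % 10 == 0


def redact(text: str) -> str:
    """Mask card numbers, email addresses, and phone numbers before storage."""
    # Cards first, so the phone pattern does not claim their digits.
    text = CARD_CANDIDATE.sub(
        lambda m: "[CARD]" if luhn_valid(m.group()) else m.group(), text)
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)
```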

Challenge 9: Multilingual and Code-Switching Failures

Businesses operating across multiple markets need voice agents that handle not just multiple languages but code-switching — when callers seamlessly switch between languages within the same conversation. A customer in Addis Ababa might switch between Amharic and English. A caller in Mumbai might blend Hindi and English. Standard voice agents trained on single-language datasets fail catastrophically in these scenarios.

How to solve it: Deploy multilingual NLU (natural language understanding) models that support language detection and switching at the utterance level. Train on code-switched conversational datasets that reflect how your actual customers speak. Build language-specific dialogue flows rather than translating a single English flow — translation is not localisation. For businesses targeting diverse markets like Australia, India, South Africa, and Ethiopia, this capability is not optional.
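Utterance-level routing can be sketched with a crude script-based detector. This is an assumption-laden stand-in for a trained language-identification model: it only recognises Amharic by its Ethiopic script and Hindi by Devanagari, so romanised Hindi typed or transcribed in Latin script would be counted as English. The flow names are hypothetical placeholders for separate, language-specific dialogue flows.

```python
from typing import Optional

# Unicode blocks used as a crude language signal (an assumption, not real language ID).
SCRIPT_RANGES = {
    "am": (0x1200, 0x137F),  # Ethiopic block, used for Amharic
    "hi": (0x0900, 0x097F),  # Devanagari block, used for Hindi
}

# Hypothetical language-specific dialogue flows (not translations of one flow).
FLOWS = {"en": "english_flow", "hi": "hindi_flow", "am": "amharic_flow"}


def token_language(token: str, default: str = "en") -> Optional[str]:
    """Guess a token's language from its script; None for tokens with no letters."""
    for lang, (low, high) in SCRIPT_RANGES.items():
        if any(low <= ord(ch) <= high for ch in token):
            return lang
    return default if any(ch.isalpha() for ch in token) else None


def detect_languages(utterance: str) -> dict[str, int]:
    """Count tokens per detected language in one utterance."""
    counts: dict[str, int] = {}
    for token in utterance.split():
        lang = token_language(token)
        if lang is not None:
            counts[lang] = counts.get(lang, 0) + 1
    return counts


def route_utterance(utterance: str) -> tuple[str, bool]:
    """Return (flow name, code_switched) for one utterance."""
    counts = detect_languages(utterance)
    if not counts:
        return FLOWS["en"], False
    dominant = max(counts, key=counts.get)
    return FLOWS[dominant], len(counts) > 1
```

Because detection runs on every utterance, a caller who switches languages mid-call is re-routed on the next turn, and the code-switched flag lets a flow accept mixed input instead of rejecting it.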

Appther has deep experience building multilingual conversational AI solutions through its AI chatbot development services, covering English, Hindi, Amharic, and other regional languages.

Challenge 10: Unrealistic ROI Expectations and Measurement Failures

The most common non-technical reason why AI voice agents fail is not a technology problem at all — it is a business expectations problem. Leadership teams often expect 90 percent automation rates within the first month, or assume the voice agent will immediately replace human agents entirely. When early results fall short of these unrealistic benchmarks, the project gets labelled a failure and budget gets pulled — often right before the system would have reached production maturity.

How to solve it: Adopt a phased deployment model with measurable KPIs at each stage. Start with the highest-volume, lowest-complexity use cases (FAQs, appointment scheduling, account balance inquiries) and expand scope incrementally. Define success metrics that reflect real business impact: containment rate (percentage of calls resolved without human intervention), average handling time reduction, customer satisfaction scores, cost per interaction, and escalation rate. A small service business deploying voice AI typically sees ROI breakeven within 3.2 months, with annual savings of USD 23,000 to 42,000 by automating receptionist functions alone.
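The KPIs above are straightforward to compute from call records. This sketch assumes a hypothetical `CallRecord` with flags for containment and escalation, plus handling time and cost per call; breakeven is computed as upfront cost divided by net monthly savings, ignoring discounting. Any sample figures you feed it should come from your own deployment.

```python
import math
from dataclasses import dataclass


@dataclass
class CallRecord:
    contained: bool       # resolved without any human intervention
    escalated: bool       # transferred to a human agent
    handle_seconds: float
    cost_usd: float


def kpis(calls: list[CallRecord]) -> dict[str, float]:
    """Per-stage KPIs over a batch of calls."""
    n = len(calls)
    if n == 0:
        raise ValueError("no calls to measure")
    return {
        "containment_rate": sum(c.contained for c in calls) / n,
        "escalation_rate": sum(c.escalated for c in calls) / n,
        "avg_handle_seconds": sum(c.handle_seconds for c in calls) / n,
        "cost_per_interaction": sum(c.cost_usd for c in calls) / n,
    }


def breakeven_months(upfront_cost: float, monthly_savings: float) -> float:
    """Months until cumulative savings cover the upfront cost (no discounting)."""
    if monthly_savings <= 0:
        return math.inf
    return upfront_cost / monthly_savings
```

Containment and escalation rates need not add up to 1: an abandoned call is neither contained nor escalated, which is why both are worth tracking separately.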

The Deeper Pattern: Why Most Failures Are Architecture Problems, Not AI Problems

If there is a single takeaway from these 10 challenges, it is this: voice AI failures are almost never caused by the AI model itself. They are caused by the architecture around it — the pipeline design, the integration layer, the data quality, the conversation design, and the deployment strategy. A mediocre LLM wrapped in excellent architecture will outperform a state-of-the-art LLM wrapped in poor architecture every single time.

This is why choosing the right development partner matters more than choosing the right AI model. The partner who understands production-grade voice AI architecture, enterprise system integration, multilingual NLP, and iterative deployment methodology is the partner who will deliver a voice agent that actually works in the real world.

Frequently Asked Questions

How much does it cost to build an AI voice agent?

Costs vary significantly based on complexity. A basic FAQ voice agent starts from USD 15,000–25,000, while a full transactional voice agent with CRM integration, multilingual support, and compliance features ranges from USD 40,000–80,000. Per-minute operational costs in production run approximately USD 0.07–0.15 per minute, compared to USD 2.50–4.00 per minute for human agents.
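To see what the per-minute figures mean at scale, here is the arithmetic for a given monthly call volume, using the ranges quoted in the answer above as inputs. The 10,000-minute volume is an arbitrary illustration.

```python
# Per-minute cost ranges (USD) as quoted in the answer above.
AI_RATE = (0.07, 0.15)
HUMAN_RATE = (2.50, 4.00)


def monthly_cost_range(minutes: float, rate: tuple[float, float]) -> tuple[float, float]:
    """Low and high monthly cost for a volume of minutes at a per-minute rate range."""
    low, high = rate
    return minutes * low, minutes * high


if __name__ == "__main__":
    ai_low, ai_high = monthly_cost_range(10_000, AI_RATE)
    human_low, human_high = monthly_cost_range(10_000, HUMAN_RATE)
    print(f"AI:    ${ai_low:,.0f} to ${ai_high:,.0f} per month")
    print(f"Human: ${human_low:,.0f} to ${human_high:,.0f} per month")
```

At 10,000 minutes a month this works out to roughly USD 700 to 1,500 for the voice agent versus USD 25,000 to 40,000 for human agents, before the upfront build cost is amortised.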

What is a good containment rate for a voice agent?

Industry benchmarks in 2026 show that well-built voice agents achieve 60–80 percent containment rates for tier-one inquiries. Top performers reach 85–90 percent on structured use cases like appointment scheduling, balance inquiries, and order status checks. The key is to start with a focused scope and expand incrementally.

How long does it take to deploy a production voice agent?

A focused MVP targeting the top 20–30 use cases typically takes 8–12 weeks from discovery to production launch. Enterprise deployments with complex integrations, multilingual support, and compliance requirements range from 14–20 weeks. Continuous improvement and expansion are ongoing after launch.

Can AI voice agents handle emotional or frustrated callers?

Modern voice agents can detect emotional cues through sentiment analysis, speech pattern changes, and keyword detection. The best practice is not to attempt to “calm down” a frustrated caller through automation, but to immediately offer a warm transfer to a human agent with full conversation context. Emotional intelligence in voice AI means knowing when to step aside.

Which industries benefit most from AI voice agents?

Financial services (BFSI) leads adoption with 32.9 percent market share, followed by healthcare, retail, and telecommunications. In healthcare, AI applications as a whole have been projected to save the US healthcare economy up to USD 150 billion annually, and voice agents contribute through appointment scheduling, symptom checking, and patient follow-up automation. Any industry with high call volumes and repetitive inquiry patterns can see significant ROI.

Conclusion: Build Voice AI That Works in the Real World

The voice AI market in 2026 is not waiting for businesses to figure this out. Conversational AI will reach USD 41.39 billion by 2030, and contact centres worldwide are saving billions by deploying intelligent voice agents today. The businesses that win are not the ones with the most advanced AI models — they are the ones that get the architecture, integration, and deployment strategy right.

Every challenge in this guide is solvable. High latency, poor accent recognition, hallucination, security gaps, integration failures, and unrealistic expectations — all of them have proven, production-tested solutions. The question is whether you have the right technology partner to implement them.